14 July 2014 By Kate Nightingale

We live in an increasingly digital world. We work, shop and play digitally most of the time, or at least a digital device is used at some point during these activities. Moreover, the marketing used to reach the majority of consumers is increasingly digital, as countries all over the world gain access to the internet, whether via a computer or a mobile device. And the number of people online is only going up.

Basically, most of our daily activities are facilitated by, shared through or experienced with some type of digital device. The crucial word here is ‘EXPERIENCE’. We all search for meaningful, intriguing or shocking experiences every day of our lives, whether it’s sipping cafe au lait in a romantic cafe in Paris, watching a chick flick with your girlfriends and running out of tissues, or meeting your new love for the first time. All these experiences have one thing in common: they are multi-sensory. The smell of that freshly brewed coffee, the warmth and complexity of that first taste, the view of the Eiffel Tower, the passion and musicality of the French language…

Feeling like jumping on a Eurostar for a quick Paris experience? Now imagine that you can have all that in the comfort of your home. I know, it probably won’t feel as romantic and extraordinary as in real life, but it will certainly be possible in the not too distant future.

Scientists are hard at work developing technologies that will allow you to transfer smells, tastes and textures digitally, or even at some point create an augmented or virtual reality of a Paris cafe with all those sensations available to you. They are also teaching computers how to see, smell or develop nutritious and healthy tastes, with the goal of improving our lives.

One of the better developed areas of research is seeing. There are already plenty of programmes available that can, for example, read our emotions while we watch an advert, so that advertising executives know whether the ad they have produced will have the desired effect. One of these programmes is FaceReader, developed by VicarVision, which has also recently been introduced for online use. Another exciting VicarVision project is ‘Empathic Products’, which uses emotion recognition to, for example, personalise digital signage and adverts in shopping centres.

How about social media analytics and consumer insight? As we share more and more visual content and less text, the need to analyse our likes and dislikes based on the photos we share has become urgent. Fortunately, companies like Curalate have developed software that helps brands gain useful insight from visual content, or lets them send personalised offers based on the photos people share via Instagram.

But these are not the most exciting developments. Much more intriguing and perhaps slightly shocking technologies are being developed to help us touch, sniff and taste digitally.

We already have various vibrations on mobile devices to let us know when we perform certain functions. Notice the difference between the vibration when you press the keyboard and the one when you receive a text or tweet? This is nothing! Soon we will be able to feel the textures of fabrics and other materials via ‘microscopic’ vibrations sent to our mobile devices.

Imagine shopping online for a dress and being able to feel the texture of the fabric it is made of. Or looking at an advert for a jumper at a train station and being able to touch it and, obviously, buy it instantly. Or think about the possibilities for the B2B market: buyers being able to check the texture and quality of a product virtually before ordering thousands of items to sell in their stores. And how about feeling the temperature or the climate via your phone? This will add a completely new dimension to booking travel and, who knows, maybe even to virtual travel. Last year Virgin Holidays opened a real-life version of such an experience in Bluewater, a ‘sensory holiday laboratory’ as they called it, where you can stand on a sandy beach, smell the sea and take photographs to share on your social media. Now imagine the same experience in your living room…

The area of research working on making this possible is called HAPTICS, as in haptic (touch) perception. One of the experts in the field is Katherine Kuchenbecker, who runs the Haptics Group at the University of Pennsylvania. In this short video she explains some of the research the group is working on and introduces the term Haptography: photography with haptic qualities. How about Instagramming or Tweeting a picture of a cat that you can actually stroke?! Oooh!

The IBM Research lab is yet another institution working on developing such technology. They explain that at the beginning it will take the form of a dictionary in which, for example, silk has a specific vibration definition that a company will be able to use to represent the fabric in its products. Eventually, however, we will be able to touch digitally in real time.

Immersion Corporation, founded in 1993, is a pioneering company in the use of haptics to enhance digital experience. They are developing some really interesting technologies for mobile, gaming and even films and sport. They have, for example, created an engine that automatically translates the audio in a game into haptic feedback. They are also working on applying this to video content such as adverts, action movies and sports broadcasts. How would you like to feel like you’re on the field during the World Cup Final?! Soon it will be possible.

It all sounds ‘haptastic’, but why would companies invest in it? Immersion Corporation actually did some research on this and found that content with haptics increased the viewer’s level of arousal by 25%. From consumer psychology we know that arousal and pleasure are key motivators to purchase, so imagine the effect of haptic content on your sales figures.

They have also tested a metric commonly used in streaming video called quality of experience. They asked participants to watch five minutes of content and divided them into three conditions: no haptics, haptics reflecting the subwoofer experience, and haptics adding to the story-telling. They found that, compared to content with no haptics, quality of experience was 10-15% higher in the subwoofer haptics condition and 25-30% higher in the narrative haptics condition. See more of their research here.

So soon we will be able to touch the dress before we buy it, but how about buying perfume or other cosmetics online? Not to worry! Digital scent messaging is already here.

A new invention called the oPhone has just been introduced to the market. It allows you to send scent messages and even create your own scent impressions. There is also an iPhone app called oSnap which allows you to create sensory oNotes that you can share with your friends. However, to be able to actually smell your creations, your friends will either have to have an oPhone or go to one of the HotSpots, currently only available in Paris and New York. One of the founders, Dr. David Edwards, says that the scent vocabulary is at the moment limited to some food-related smells, but it’s only a matter of time before we will be able to watch a movie and smell the beach we see.

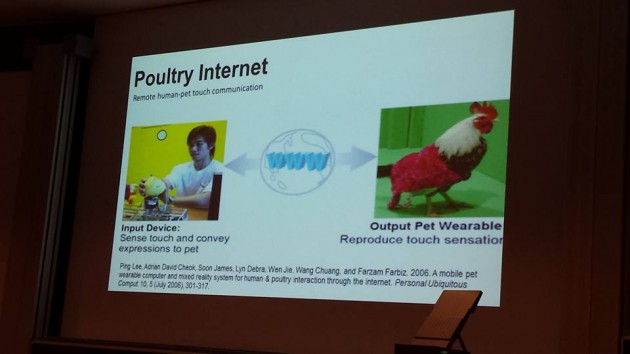

Another inventor in the field is Dr. Adrian Cheok, founder of the Mixed Reality Lab in Singapore and professor of pervasive technology at City University London. He and his team invented a small device called Scentee which you can attach to your smartphone to send various smells to your friends and family. However, you need a separate cartridge for each smell, and the scent vocabulary is currently limited.

Dr. Cheok also works on digital taste, the ability to send tastes via the internet and mobile devices. He presented his work last month at an event called the Circus for the Senses, which took place at the Natural History Museum during Universities Week. It certainly had a great reception. Who wouldn’t want to watch their favourite chef preparing a delicious raspberry Pavlova and be able to taste it immediately? I’m sure you would get up right this second and run to buy or make it. No, by then you will be able to press a button on your TV or mobile and it will jump out of the screen onto your table! I know, maybe slightly far-fetched, but totally possible within, I guess, about 10 years.

So now we are impressed when we can download movies and music or purchase our groceries via our mobile. In 5 years we will have all this amazing gear available, allowing us to sniff, taste and touch what we see on our screens.

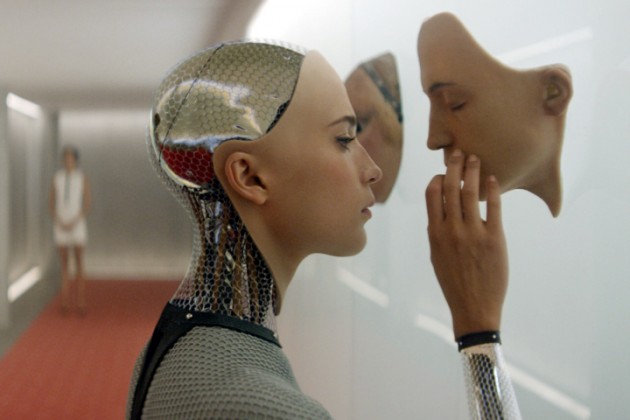

However, people will still want an experience and social connections. This is where augmented reality, virtual shops and other virtual venues will come into play. Brands will be able to have virtual shops which people can visit from the comfort of their home. I’m not talking about using an avatar but about being actually immersed in a multi-sensory virtual brand experience. So you will be able to walk through the virtual shop, touch the merchandise, smell it and even try it on. Imagine the possibilities for the company to personalise this experience for each individual at the touch of a button! Oh, sorry! This will be automated with state-of-the-art software!

And how about applying technologies such as FaceReader, which can read our emotions, and other programmes that react to our biological functions, like heart rate and level of arousal, to adjust this virtual experience? For example, computers will be able to see disgust or another unpleasant emotion on your face and attribute it to a smell you perceived. That will allow a retailer to change this olfactory experience to a positive one instantly.

And how about online dating? We will be able to sniff pheromones, adding a completely different dimension to the idea of love at first sniff.

Do you know of Secret Cinema? These are very secretive events where you can truly experience certain movies by being placed in a specially created set. Now imagine that you can do it from the comfort of your couch. It’s going to be kind of like 3D with added touch, temperature, scent and taste sensations. It will make you feel like you’re a part of the action and, who knows, maybe even let you insert yourself into the plot. That’s true co-creation!

Dr. Cheok certainly shares that view, as reflected in his comment for a CNN article: ‘the ultimate direction of goal is a multi-sensory device unifying all five senses to create an immersive virtual reality, and could be usable within five years’.

Of course, before this technology becomes widely available and affordable, companies need to create immersive and co-creative multi-sensory consumer experiences in real life. As research in consumer psychology and marketing shows us, this can have incredible effects on the consumer-brand relationship and, obviously, the bottom line. Look out for our Sense Reports (coming soon) explaining some of these effects.

See more at: http://stylepsychology.co.uk/digitalmultisensoryconsumerexperience/