Magic Land DESIGN BIBLE NOW ONLINE!!

Dear users, our Magic Land Design Bible is now online for public viewing. Please download the PDF file, or use "Save Target As" to save it, from the link below:

https://www.mixedrealitylab.org/media/projects/Magic_land_design_bible.pdf

A new form of interactive media, art creation and reception has been developed here at MXR Lab. People can be recorded in real time, and their small 3D images can be brought face to face, in an embodied manner, with 3D digital characters in the virtual world. Users can choose pre-recorded models of people and 3D virtual characters to build up a complete mixed reality interactive story or mini scenes. In particular, people can see the scenes on a real table and tangibly manipulate the virtual characters alongside the live human characters. Our system merges the virtual world with live humans from the real world.

The system demonstrates several technologies in human-computer interaction: mixed reality, tangible interaction, and 3D Live capturing and rendering.

First, users enter the recording room to be captured (Figure 1). The recording room measures 3m x 3m x 3m. Once the user enters the room, a voice instructs her to stand at the center of the room and press a button mounted on the wall to start the capture. The user then has a fun time being captured while, outside, other participants can see inside the room through a monitor. This lets the participants outside share the excitement of seeing the person being captured live, who then appears on the interactive mixed reality table. Each capture is twenty seconds long.

Figure 1: User is captured inside the recording room.

Figure 2: User selects virtual characters at the menu table.

After being captured, users go to the Menu Table to select the virtual characters they want to play with (Figure 2). Most excitingly, the user sees herself as a little 3D Live character on the table. Selecting characters is simple and is done with two mechanical push buttons on the table, one for each of the two types of characters (virtual and 3D Live captured). Users press a button to change the object shown on the Menu Table, and move an empty cup close to this object to pick it up. To empty a cup (trash), users move the cup close to the virtual bin placed at the middle of the Menu Table. The user will feel a rush of amazement at being able to pick herself or her friends up in the cup.
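The Menu Table behaviour described above can be sketched as a small state model. This is only an illustrative sketch, not the system's actual code: the class names, the coordinates, and the `PICK_RADIUS` threshold are all assumptions introduced here, since the source does not say how "close" is measured.

```python
from dataclasses import dataclass
from math import hypot
from typing import Optional

PICK_RADIUS = 0.05  # hypothetical "close enough" distance in metres (not from the source)


@dataclass
class Cup:
    x: float
    y: float
    content: Optional[str] = None  # None means the cup is empty


class Menu:
    """One menu slot; its push button cycles through the available characters."""

    def __init__(self, x, y, characters):
        self.x, self.y = x, y
        self.characters = list(characters)
        self.index = 0

    @property
    def shown(self):
        return self.characters[self.index]

    def press_button(self):
        # Each press shows the next character, wrapping around at the end.
        self.index = (self.index + 1) % len(self.characters)


def near(cup, x, y, radius=PICK_RADIUS):
    return hypot(cup.x - x, cup.y - y) <= radius


def update_cup(cup, menus, bin_pos):
    """Empty a cup that is near the bin, or fill an empty cup that is near a menu."""
    if near(cup, *bin_pos):
        cup.content = None
        return
    if cup.content is None:
        for menu in menus:
            if near(cup, menu.x, menu.y):
                cup.content = menu.shown
                return
```

In this sketch a cup that already holds a character ignores the menus, so it has to be emptied at the bin before it can pick up something else. That rule follows the "empty cup" wording above but is otherwise an assumption.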

After picking up a character (virtual character or 3D Live human), users can bring the cup to the Main Interactive Table to play with it (Figure 3). There are five cups in total, so multiple users can interact together in a social manner to create interesting and unique interactive stories. The main table is overlaid with a digitally created setting, in this case an Asian garden, and other digital characters such as a dragon or a witch interact with the player’s character. The player participates in these interactions by moving her “magic cup” as a tangible interaction, and the responses depend on the type of character that she is interacting with. There is also a large screen on the wall that mirrors the mixed reality view of the first user when she uses the head-mounted display (HMD), or shows the whole Magic Land from a distant viewpoint when nobody is using the system. This allows passers-by to understand the interactive system very quickly, and attracts them to participate and be captured themselves as 3D characters to become part of the story.

Figure 3: Users are playing with virtual characters at the Main Interactive Table

Figure 4: Virtual characters interact with each other when they are close together.

Consequently, there will be many 3D models moving and interacting in a virtual scene on the table, which forms a beautiful virtual world of those small characters. If two characters are close together, they interact with each other in a pre-defined way (Figure 4). For example, if the dragon comes near the 3D Live captured real human, it blows fire on the human. At the same time, the sound of the dragon blowing fire is heard. The participants will feel delight at the real-time interactivity between the 3D Live human characters and the mixed reality world. Furthermore, this interaction is achieved by moving the objects tangibly with their hands. This gives an exciting feeling of the tangible merging of real humans with the virtual world to form new interactive stories.
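The proximity rule described above can be sketched as a lookup of pre-defined reactions for every pair of characters that are close together. This is a minimal sketch under stated assumptions: the `INTERACTION_RADIUS` value, the reaction names and the sound file name are hypothetical, and only the dragon and witch behaviours are taken from the text and figures.

```python
from itertools import combinations
from math import dist

INTERACTION_RADIUS = 0.15  # hypothetical trigger distance in metres

# (actor kind, target kind) -> (animation, sound); names are illustrative only
REACTIONS = {
    ("dragon", "live_human"): ("blow_fire", "fire.wav"),
    ("witch", "live_human"): ("turn_to_stone", None),
}


def find_interactions(characters, radius=INTERACTION_RADIUS):
    """Return (actor, target, animation, sound) for every close pair with a rule.

    characters maps a character name to (kind, (x, y)) on the table.
    """
    events = []
    for (a, (kind_a, pos_a)), (b, (kind_b, pos_b)) in combinations(characters.items(), 2):
        if dist(pos_a, pos_b) > radius:
            continue  # too far apart to interact
        # Check the rule table in both directions, since either one may be the actor.
        for actor, actor_kind, target, target_kind in (
            (a, kind_a, b, kind_b),
            (b, kind_b, a, kind_a),
        ):
            reaction = REACTIONS.get((actor_kind, target_kind))
            if reaction is not None:
                events.append((actor, target) + reaction)
    return events
```

Calling `find_interactions` once per rendered frame with the current cup positions would yield the animations and sounds to play. Checking every pair is adequate for a handful of cups; the source does not describe how the real system does this.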

Figures 5 to 8 show real images of our system. Figure 5 is a real example of two people being captured inside the recording room.

Figure 5: Two users are being captured in the recording room

Figure 6 shows a real example of the Menu Table. In the left image, we can see a user using a cup to pick up a virtual object; at the edge of the table closest to the user are the two mechanical buttons. In the right image, we can see the augmented view seen by this user. The user had previously selected a dragon, which is inside the cup. The giraffe is in the left menu for virtual characters, while a boy is in the right menu for real captured persons. The two buttons change the characters on those two menus.

Figure 6: Menu Table: (Left) A user using a cup to pick up a virtual object (Right) Augmented View seen by users


A real example of the tangible interaction on the Main Interactive Table is shown in Figure 7. Here we can see a user using a cup to tangibly move a virtual panda object (left image) and using another cup to trigger the volcano by putting the character physically near the volcano (right image). This is an example of physical interaction affecting the virtual scene to create interactive stories.

Figure 7: Tangible interaction on the Main Table:


(Left) Tangibly picking up the virtual object from the table. (Right) The trigger of the volcano by placing a cup with virtual boy physically near to the volcano.

Another real example of this type of interaction can be seen in Figure 8, where the witch, tangibly moved with the cup, turns the 3D Live human character that comes physically close to it into stone.

Figure 8: Main Table: The Witch turns the 3D Live human which comes close to it into a stone


Software Architecture

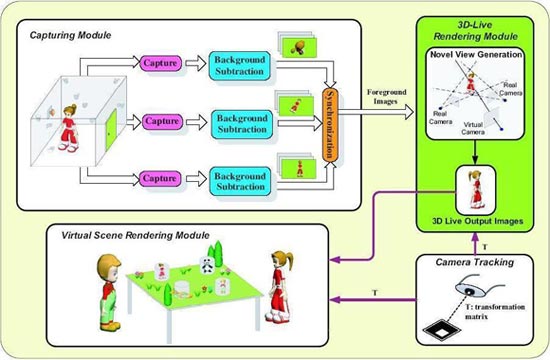

All basic modules and processing stages of the system are represented in Figure 9. The Capturing and Image Processing modules run on each Capture Server machine. After the Capturing module obtains raw images from the cameras, the Image Processing module extracts the foreground objects from the background scene to obtain the silhouettes, compensates for the radial distortion component of the camera model, and applies a simple compression technique.
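The three Image Processing steps can be sketched in plain Python as follows. The source does not name the algorithms used, so everything here is an assumption chosen for illustration: thresholded background subtraction for the silhouette, a one-coefficient radial model `x_d = x_u * (1 + k1 * r_u^2)` in normalised image coordinates for the distortion, and run-length encoding as the "simple compression technique".

```python
def extract_silhouette(frame, background, threshold=30):
    """Binary mask: 1 where a grayscale pixel differs from the background image."""
    return [
        [1 if abs(p - b) > threshold else 0 for p, b in zip(frame_row, bg_row)]
        for frame_row, bg_row in zip(frame, background)
    ]


def undistort_point(xd, yd, k1, iterations=10):
    """Invert x_d = x_u * (1 + k1 * r_u^2) by fixed-point iteration.

    Suitable for mild distortion only; coordinates are normalised, not pixels.
    """
    xu, yu = xd, yd  # start from the distorted point
    for _ in range(iterations):
        r2 = xu * xu + yu * yu
        scale = 1.0 + k1 * r2
        xu, yu = xd / scale, yd / scale
    return xu, yu


def run_length_encode(row):
    """Compress one mask row into (value, run length) pairs."""
    runs = []
    for value in row:
        if runs and runs[-1][0] == value:
            runs[-1][1] += 1
        else:
            runs.append([value, 1])
    return [tuple(run) for run in runs]
```

Binary silhouettes consist mostly of long runs of identical values, which is why run-length encoding is a plausible stand-in here; the real system may use a different scheme.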

Figure 9: Software Architecture

The Synchronization module, on the Synchronization machine, is responsible for getting the processed images from all the cameras and checking their timestamps to synchronize them. If the images are not synchronized according to their timestamps, the Synchronization module requests the slowest camera to keep capturing and sending back images until the images from all nine cameras appear to have been captured at nearly the same time.
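The catch-up loop described above can be sketched as follows. This is a simplified model, not the actual module: timestamps are assumed to be in seconds, the `tolerance` and `max_requests` values are hypothetical, and `recapture` stands in for whatever network request the real system sends to a Capture Server.

```python
def synchronize(latest, recapture, tolerance=0.010, max_requests=100):
    """Ask the slowest camera for newer frames until all timestamps nearly agree.

    latest maps camera id -> timestamp of its most recent frame.
    recapture(camera_id) requests a new frame and returns its timestamp.
    """
    latest = dict(latest)  # work on a copy so the caller's dict is untouched
    for _ in range(max_requests):
        slowest = min(latest, key=latest.get)
        newest = max(latest.values())
        if newest - latest[slowest] <= tolerance:
            return latest  # every frame lies within the tolerance window
        latest[slowest] = recapture(slowest)
    raise TimeoutError("cameras did not converge within max_requests")


def make_simulated_recapture(clocks, frame_period=1 / 30):
    """Stand-in for a camera request: each call advances that camera by one frame."""

    def recapture(camera_id):
        clocks[camera_id] += frame_period
        return clocks[camera_id]

    return recapture
```

The `max_requests` guard is an addition for safety in this sketch, so that a stalled camera cannot block the loop forever; the source does not say how the real module handles that case.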

The Tracking module obtains the images from the camera mounted on the HMD, tracks the marker pattern and calculates the Euclidean transformation matrix relating the marker coordinates to the camera coordinates. Details about this well-known marker-based tracking technique can be found in [ARToolkit] or [MXRToolkit].
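To make the role of the transformation matrix concrete, the sketch below builds a 4x4 Euclidean (rigid) transform, uses it to map a point from marker coordinates into camera coordinates, and inverts it. It does not use the ARToolkit or MXRToolkit APIs; the helper names are hypothetical, and the rotation is restricted to the z axis purely to keep the example short.

```python
from math import cos, sin, radians


def rigid_transform(yaw_deg, translation):
    """4x4 matrix: rotation about the z axis, then a translation."""
    c, s = cos(radians(yaw_deg)), sin(radians(yaw_deg))
    tx, ty, tz = translation
    return [
        [c, -s, 0.0, tx],
        [s, c, 0.0, ty],
        [0.0, 0.0, 1.0, tz],
        [0.0, 0.0, 0.0, 1.0],
    ]


def apply(T, point):
    """Map a 3D point through T using homogeneous coordinates."""
    v = (point[0], point[1], point[2], 1.0)
    return tuple(sum(T[i][j] * v[j] for j in range(4)) for i in range(3))


def invert(T):
    """Inverse of a rigid transform: rotation R^T, translation -R^T t."""
    R_t = [[T[j][i] for j in range(3)] for i in range(3)]
    t = [T[i][3] for i in range(3)]
    new_t = [-sum(R_t[i][j] * t[j] for j in range(3)) for i in range(3)]
    return [R_t[i] + [new_t[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]
```

Because the matrix is Euclidean, its inverse needs only a transpose and one matrix-vector product, with no general matrix inversion. A renderer can use the same matrix to place a virtual camera relative to the marker, so that virtual content is viewed from the same angle and position as the head-mounted camera.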

After receiving the images from the Synchronization module, and the transformation matrix from the Tracking module, the Rendering module will generate a novel view of the subject based on these inputs. The novel image is generated such that the virtual camera views the subject from exactly the same angle and position as the head-mounted camera views the marker. This simulated view of the remote collaborator is then superimposed on the original image and displayed to the user.

Implications for the future

As a demonstration of Mixed Reality, 3d Live and Tangible Interaction technologies, the system shows our future vision of human-human communication, human-computer interaction as well as the future of interactive art and computer entertainment.

Imagine that you can see your distant friends in full 3D, standing in front of you in the real world, while talking with them over the phone, just like the image of the future presented in the holographic form of Princess Leia in Star Wars. 3D Live Human Magic Land demonstrates our advanced technology, which can make this vision real. This technology can capture humans in 3D and place them into a mixed reality environment at other locations in real time. It opens a new chapter for human communication, where not only voice but also gesture and body motion are transmitted in full 3D.

With the potential of showing and interacting with virtual characters in our real environment in full 3D, Magic Land also opens a new chapter for interactive art. We can perceive 3D Live Human Magic Land as an experimental laboratory that can be filled with a wide range of artistic content, which is only limited by the imagination of the creators. The ability for the creator to make a recording of herself and watch herself acting in 3D with other objects leads to a special kind of symbiotic self reflection and allows our own interactive stories to be viewed in full 3d, now and in the future. The future is also important as it allows intergenerational 3d communication. We can imagine our grandmother talking to us as a young girl in our physical and mixed reality space, allowing us to feel a true affinity of how it was that our grandmother looked, moved, and gestured many years before. Our system enables a new form of art creation and reception that generates an intimate situation between the artist and the audience, and brings together the processes of creation, acting and reception in one environment. It allows the creation of cultural interactive stories on a mixed reality table space.

The significance of Magic Land is not only in art but also in computer entertainment. It is an unusual demonstration of the use of mixed reality, tangible interaction and 3D Live technologies in 3D interactive computer games. We believe that mixed reality will be the future technology of 3D interactive games, in which the virtual environment and virtual characters are merged into our real world in full 3D. 3D games will be displayed in our real 3D space, no longer limited to 2D screens and panels. Moreover, instead of using external input devices such as gamepads or keyboards to navigate inside the 3D environment, which is necessary when users play 3D games on 2D monitors, players will be able to navigate simply by moving their heads naturally to look at the virtual scene in the mixed reality environment from any angle. Tangible interaction will also help players interact with and control virtual characters in 3D games in a very natural and easy manner, as if they were playing with real physical toys. Mixed reality and tangible interaction have great potential to become an entertainment form for families all over the world.

The 3D Live technology in Magic Land also opens a new trend in 3D interactive games. The player can now become a new character in the game story and merge into the virtual environment. Players can act and interact with other virtual characters, and the story of the game will follow the way they deal with those characters. Instead of using a main virtual character and controlling it throughout the game, RPG players can now really play the role of the main character and explore the virtual world themselves as a live 3D human in that world. This becomes possible if the player inside the 3D Live recording room is totally immersed in the virtual environment by wearing an HMD showing the view of the main virtual character, while the players outside view and construct the virtual scene on the table to challenge the main character. This new form of entertainment would serve humans’ endless creativity in art and 3D entertainment.

We believe that Magic Land will provide a framework for many breakthrough and pioneering human-computer communication and interaction technologies in the future. These will have a great impact on art, entertainment, and cultural heritage. We feel the following scenarios are possible:

- Holo-phone technology: With this technology, users can see the 3D holographic form of the remote participants in the real environment when talking with them over the phone, from anywhere in the world. This is just like the way Princess Leia communicated in Star Wars.

- 3D Books: When reading such a book, users can see 3D illustrated figures on the actual pages, moving and gesturing. For example, we could read a book about Michael Jackson and see him dancing on the pages.

- Education: With mixed reality and 3D Live, a new form of media will be created to replace traditional 2D media in education. For example, students can see in their books real images of Chinese people at the Great Wall in China in full 3D. Moreover, they can stay at home and watch their teacher live and in 3D, and attend the class as if they were really there.

- Entertainment: Users do not need to go to the live show of a famous singer, orchestra, or ballet dancer, but they still can watch this show in 3D form at home, as if the performer was really there in full 3D.

- Computer games: In the new generation of computer games, users can see 3D figures move around in the actual physical world, and can actually play a role in the game. Furthermore, they can play with their distant friends and see their friends’ images in the real physical environment in full 3D.

- Sport: Currently, sport events such as football matches and the Olympic Games can be watched live on television, but only in 2D. In the future, with 3D Live technology, users can watch these events in full 3D as if they were really at the venue. They can have the full view of a soccer, volleyball or basketball match, etc., on their table in 3D.

- Medical collaboration: A group of doctors sits in a boardroom and watches, “live” or “recorded”, a complex heart surgery conducted at another place. They can observe the operation in 3D from any angle, and observe the patient from the inside using 3D captured data.

- Military: In military training, soldiers wearing HMDs can see virtual enemies appear around them as if they were real. In a real battle, soldiers can view the map through a head-mounted display, and also see their captain commanding them in full live 3D.

Video Documentation

Magic Land

Exhibition History

- Planet Games exhibition at Singapore Science Center, 09/12/2004

- Permanent exhibition at Singapore Science Center, 1/12/2005

- Interactivity Venue of SIGCHI Conference, Portland, OR, USA, April 02-07, 2005

- WIRED NextFest exhibition at Chicago, USA, June 2005

- 3D Play about Mozart’s life: Project at Vienna, Austria, November 2004

Team

Ta Huynh Duy Nguyen, Tran Cong Thien Qui, Ke Xu, Dr. Adrian David Cheok, Sze Lee Teo, Zhi Ying Zhou, Ashita Mallavaarachchi, Lee Shang Ping, Liu Wei, Hui Siang Teo, Le Nam Thang, Yu Li, Hirokazu Kato